THE GAME: Build Your Inference Rig

You have a budget. You have a workload. Build the machine.

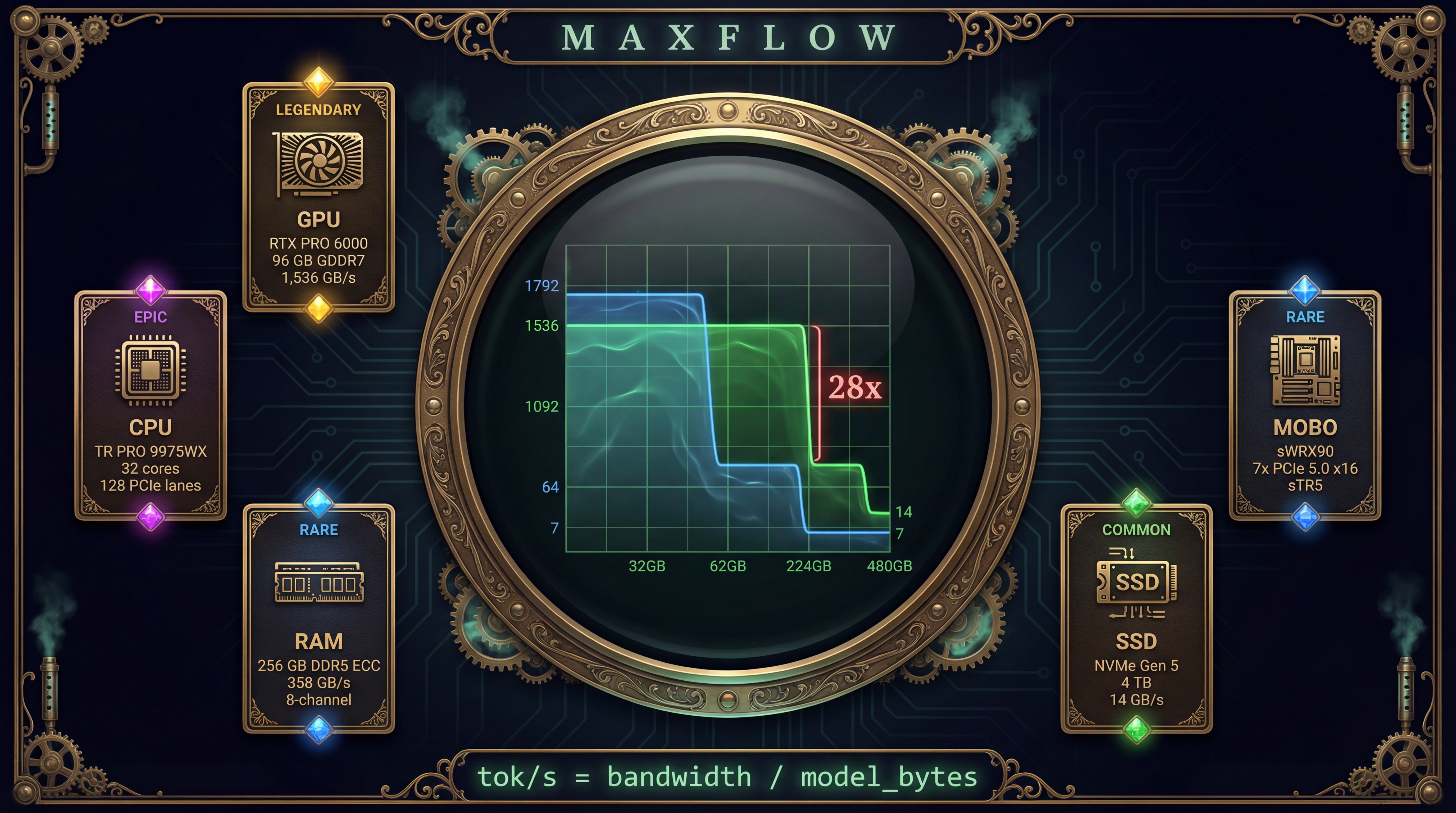

The staircase updates as you pick components. Every choice shifts the cliff—the point where your model overflows VRAM and performance drops 28×. Push the cliff right. Keep the staircase high. The max-flow determines your score.

Scoring Rubric

Flow Score

Largest model at ≥20 tok/s. 8B = entry. 70B-Q4 = competitive. 70B-FP16 = strong. 405B = elite.

Min-Cut

Your bottleneck component. What to upgrade next: VRAM capacity, VRAM bandwidth, PCIe, lanes, or budget.

Efficiency

Performance per dollar. Leaderboard: Budget (<$3K), Prosumer ($3-10K), Workstation ($10-40K), Research ($40K+).

SSD Bonus

Draft GPU + target GPU = speculative decoding. Augmenting path multiplier on effective tok/s.

Component Deck

Pick from these cards. Each shifts the staircase differently.

GPUs — the main pipe

| Card | VRAM | Bandwidth | TDP | Price |

|---|---|---|---|---|

| RTX 4060 Ti | 16 GB | 288 GB/s | 165W | $400 |

| RTX 4090 | 24 GB | 1,008 GB/s | 450W | $1,600 |

| RTX 5090 | 32 GB | 1,792 GB/s | 575W | $2,000 |

| RTX PRO 6000 | 96 GB | ~1,536 GB/s | 300-600W | $9,449 |

| H100 SXM | 80 GB | 3,350 GB/s | 700W | $25,000 |

CPUs — the lanes

| Card | Cores | PCIe Lanes | RAM Ch. | Price |

|---|---|---|---|---|

| i9-14900K | 24 | 20 (5.0) | 2 | $580 |

| Ryzen 9 9950X | 16 | 28 (5.0) | 2 | $550 |

| TR PRO 9975WX | 32 | 128 (5.0) | 8 | $3,924 |

| TR PRO 9995WX | 96 | 128 (5.0) | 8 | $7,469 |

RAM — the overflow pool

| Config | Capacity | Bandwidth | Price |

|---|---|---|---|

| DDR5-5600 2-ch | 192 GB | 90 GB/s | $600 |

| DDR5-5600 ECC 8-ch | 256 GB | 358 GB/s | $5,600 |

| DDR5-5600 ECC 8-ch | 512 GB | 358 GB/s | $11,200 |

Challenge Scenarios

The Freelancer

Budget: $3,000Run 70B Q4 at >10 tok/s. You have $3K. Go.

The Quant

Budget: $15,000Run 70B FP16 inference + QLoRA 70B training. Two missions, one machine.

The Lab

Budget: $50,000Run 405B Q4 at >15 tok/s AND train 8B agents with PPO.

The Endgame

Budget: unlimitedRun 405B FP16 at >20 tok/s. Minimize cost. This is the final boss.

The Staircase Mechanic

Every component shifts the staircase. The game is: make it tall and wide within budget.

| Action | Effect on Staircase |

|---|---|

| Add GPU | Extends top step right (more VRAM → cliff moves) |

| Upgrade GPU | Raises top step (faster bandwidth per GPU) |

| Add RAM | Extends middle step right (more offload capacity) |

| Upgrade CPU | More PCIe lanes = feed more GPUs at full speed |

| Better SSD | Barely moves anything (startup speed only) |

Training Badges

QLoRA Novice

~12 GB VRAM. Any modern GPU.

QLoRA Pro

~40 GB. Needs PRO 6000 or 2× consumer GPUs.

Agent Smith

~160 GB. Policy + ref + value + reward. 2× PRO 6000.

Cloud Cutter

~560 GB. Nobody does this locally. Yet.